Progress over the last ten years in areas of AI research such as natural language processing and computer vision has been stunning, but it was the 2020 release of massive models like the “Generative Pre-trained Transformer 3” (GPT-3) that demonstrated a fundamental shift in the capabilities of artificial neural networks. GPT-3 was so good at generating text that Farhad Manjoo of the New York Times wrote that it was “more than a little terrifying”. It is not open source and Microsoft now controls access to it, but its developers at OpenAI have been busy using GPT-3 as a foundational model for some very interesting variations. One example is training on text and images rather than just text. The result has been DALL-E and its descendants, which can produce photo-realistic images from simple text descriptions. This is clearly a major leap from translating English to passable French.

Setting aside the novelty of the images, we must keep in mind that the system was trained on very large databases of actual images. Are these images appearing in DALL-E's original creations? Is this not like sampling in music, which is illegal if the original work is copyrighted and no permission has been given?

If we can train on text-image pairs, why not text-code pairs? OpenAI used the content of 54 million GitHub repositories to retrain GPT-3, creating Codex: a text-to-Python translator. OpenAI published an analysis of Codex which claimed that it solves 28.8% of the problems in the HumanEval benchmark. Working with GitHub, they created GitHub Copilot, a tool that integrates with Visual Studio Code and that we discuss below.

Shortly after the release of Copilot, GitHub came under attack for precisely the copyright issues mentioned above. The Software Freedom Conservancy announced that it was giving up GitHub because of the code license issue. An article on VentureBeat.com detailed additional controversy surrounding Codex and Copilot; more specifically, it has been observed that Codex/Copilot can occasionally generate code that is not only unsafe but even racially biased.

The goal of this short blog post is not to evaluate any of the legal or moral arguments, but to look at the technology and draw a few conclusions about its value to programmers.

Installing and using Copilot with Visual Studio Code is easy and explained here. It is free to use if you are a student, teacher, or open source maintainer. Otherwise, you will need a subscription.

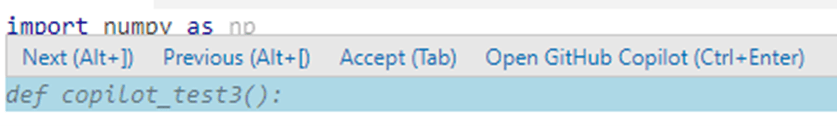

Once Copilot is installed, you will find it almost too eager to start work when you open Visual Studio Code to a blank Python file. We started with a file named copilot_test3.py and inserted the line “import numpy as np”; Copilot immediately had a suggestion for the next line, as shown below. Hovering over this proposed line produces the alternative actions: next suggestion, previous suggestion, or accept.

Hitting return rejects the suggestion. Typing “A =” produced the following suggestion.

```python
A = np.array([[1, 2, 3], [4, 5, 6], [7, 8, 9]])
```

This was acceptable. We then decided to look at Copilot's knowledge of linear algebra. To experiment with extracting eigenvectors and eigenvalues, we converted A to a symmetric matrix: we entered A = A*A.T followed by a comment requesting the eigenvalue computation, and Copilot immediately suggested the correct function call.

```python
A = A*A.T
# Compute eigenvalues and eigenvectors of A
eigvals, eigvecs = np.linalg.eig(A)
```
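Two details in this exchange are easy to miss. In NumPy, * is elementwise multiplication, so A*A.T is the (symmetric) elementwise product of A and its transpose, not the matrix product A @ A.T. And while np.linalg.eig is a correct choice, NumPy also provides np.linalg.eigh for symmetric matrices, which returns real eigenvalues in ascending order together with orthonormal eigenvectors. The short sketch below checks both points on the same 3x3 matrix; the names S, P, w and V are our own.

```python
import numpy as np

# Same 3x3 matrix Copilot suggested.
A = np.array([[1, 2, 3], [4, 5, 6], [7, 8, 9]])

# "*" is elementwise: S[i, j] = A[i, j] * A[j, i], which is symmetric.
S = A * A.T
# The matrix product is a different (also symmetric) matrix.
P = A @ A.T

# eigh assumes a symmetric (Hermitian) input: real eigenvalues in ascending
# order and orthonormal eigenvectors stored as the columns of V.
w, V = np.linalg.eigh(S)

# Each column V[:, k] should satisfy S @ V[:, k] == w[k] * V[:, k].
residual = np.abs(S @ V - V * w).max()
print(w, residual)
```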

Perhaps one of the most useful features of Copilot is its ability to use a comment string to conjure the correct library function. In some cases, this may involve a sequence of operations. A good example is converting our eigenvectors to a pandas DataFrame and saving that to a CSV file. This can be accomplished with one comment; Copilot fills in the rest.

```python
# Save eigenvectors as a pandas dataframe and save as csv
import pandas as pd
df = pd.DataFrame(eigvecs)
df.to_csv('eigvecs.csv')
```

Recovering the eigenvectors is also easily accomplished with a single comment.

```python
# load eigenvectors from csv
df = pd.read_csv('eigvecs.csv')
eigvecs = df.values[:,1:]
```

These translations can be thought of as Copilot demonstrating the classic idioms of Python library invocation. They are clearly useful.
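The [:, 1:] slice in the loading step is needed because DataFrame.to_csv writes the row index as an extra first column by default. A minimal sketch of the round trip follows, alongside an alternative that passes index_col=0 to pd.read_csv instead of slicing; the small 2x2 matrix and the temporary directory are stand-ins for illustration only.

```python
import os
import tempfile

import numpy as np
import pandas as pd

# Stand-in eigenvector matrix: orthonormal columns of a small symmetric matrix.
eigvecs = np.linalg.eigh(np.array([[2.0, 1.0], [1.0, 2.0]]))[1]

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "eigvecs.csv")
    # By default, to_csv also writes the DataFrame index as the first column.
    pd.DataFrame(eigvecs).to_csv(path)

    # Option 1 (Copilot's version): read all columns, then drop the index column.
    loaded_sliced = pd.read_csv(path).values[:, 1:]

    # Option 2: tell read_csv that column 0 holds the index.
    loaded_indexed = pd.read_csv(path, index_col=0).to_numpy()

print(np.allclose(loaded_sliced, eigvecs), np.allclose(loaded_indexed, eigvecs))
```

A third option is to pass index=False to to_csv, so that no index column is written in the first place.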

Letting Copilot Take Over the Controls

For a much more interesting test let’s see what Copilot knows about methods to solve linear algebra problems. We asked Copilot to solve Ax = b by the classical conjugate gradient method. The comment below caused Copilot to produce a perfect rendition of the method.

```python
# Now to solve Ax = b by the conjugate gradient method.
def solve(A, b, x0, tol, max_iter):
    x = x0
    r = b - np.dot(A, x)
    p = r
    rsold = np.dot(r.T, r)
    for i in range(max_iter):
        Ap = np.dot(A, p)
        alpha = rsold / np.dot(p.T, Ap)
        x = x + alpha * p
        r = r - alpha * Ap
        rsnew = np.dot(r.T, r)
        if np.sqrt(rsnew) < tol:
            break
        p = r + (rsnew / rsold) * p
        rsold = rsnew
    return x
```

Is this an example of Copilot plagiarizing a GitHub repository? Possibly, but this algorithm is in every numerical algorithms book and has been so for 50 years.
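Copilot's function is easy to check against np.linalg.solve. One caveat: conjugate gradient is only guaranteed to converge when A is symmetric positive definite, and the symmetric matrix A*A.T built earlier is not (its leading 2x2 minor is 1*25 - 8*8 = -39). The sketch below therefore uses a random symmetric positive definite test matrix; the function body is Copilot's, reindented, while the matrix size, seed and tolerance are arbitrary choices of ours.

```python
import numpy as np

def solve(A, b, x0, tol, max_iter):
    """Conjugate gradient, as generated by Copilot (reindented)."""
    x = x0
    r = b - np.dot(A, x)
    p = r
    rsold = np.dot(r.T, r)
    for i in range(max_iter):
        Ap = np.dot(A, p)
        alpha = rsold / np.dot(p.T, Ap)
        x = x + alpha * p
        r = r - alpha * Ap
        rsnew = np.dot(r.T, r)
        if np.sqrt(rsnew) < tol:
            break
        p = r + (rsnew / rsold) * p
        rsold = rsnew
    return x

# Random symmetric positive definite test matrix: M M^T plus a diagonal shift.
rng = np.random.default_rng(0)
M = rng.standard_normal((50, 50))
A = M @ M.T + 50 * np.eye(50)
b = rng.standard_normal(50)

x = solve(A, b, np.zeros(50), tol=1e-10, max_iter=200)
x_ref = np.linalg.solve(A, b)
print(np.allclose(x, x_ref))
```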

Let’s make this more challenging. In the case when A is a simple symmetric tridiagonal matrix, we can use a more efficient version. Let’s change the comment as follows.

```python
# Let A be a tridiagonal matrix defined by main diagonal vector D
# And off diagonal vector E.
# Solving Ax = b by the conjugate gradient method is in this case:
def solve_tridiagonal(D, E, b, x0, tol, max_iter):
    x = x0
    r = b - np.dot(D, x) - np.dot(E, x[1:]) - np.dot(E, x[:-1])
    p = r
    rsold = np.dot(r.T, r)
    for i in range(max_iter):
        Ap = np.dot(D, p) + np.dot(E, p[1:]) + np.dot(E, p[:-1])
        alpha = rsold / np.dot(p.T, Ap)
        x = x + alpha * p
        r = r - alpha * Ap
        rsnew = np.dot(r.T, r)
        if np.sqrt(rsnew) < tol:
            break
        p = r + (rsnew / rsold) * p
        rsold = rsnew
    return x
```

This is wonderfully creative and, at first glance, it looks perfect. It is clearly not taken from a GitHub repo; we know that because it is wrong. The error is in the computation of b - Ax by the expression b - np.dot(D, x) - np.dot(E, x[1:]) - np.dot(E, x[:-1]). While np.dot(A, x) is a vector, np.dot(D, x) is a scalar (as are the two np.dot(E, ...) terms), and we need a vector. The correct computation is

```python
r = D*x
r[1:] += E*x[:-1]
r[:-1] += E*x[1:]
r = b - r
```

However, the error occurs in two places. After commenting out the offending line and inserting the code above, we moved to the second occurrence and commented out that line as well. Copilot immediately inserted the corrected version.

```python
# Ap = np.dot(D, p) + np.dot(E, p[1:]) + np.dot(E, p[:-1])
Ap = D*p
Ap[1:] += E*p[:-1]
Ap[:-1] += E*p[1:]
```

In this case, Copilot inferred the needed correction from the change we had made in the first case.
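Putting both corrections together gives an O(n) conjugate gradient solver for a symmetric tridiagonal system, where (Ax)_i = E_{i-1} x_{i-1} + D_i x_i + E_i x_{i+1}. In the sketch below, the corrected product is factored into a small helper, matvec (our own name, not something Copilot produced), and the solution is checked against a dense matrix assembled with np.diag. The last lines also suggest why the original version looked plausible: each np.dot of two 1-D arrays returns a scalar, and NumPy silently broadcasts that scalar when it is subtracted from the vector b, so no error is raised.

```python
import numpy as np

def solve_tridiagonal(D, E, b, x0, tol, max_iter):
    """CG for a symmetric tridiagonal A with main diagonal D and off-diagonal E."""

    def matvec(v):
        # (A v)_i = E[i-1] v[i-1] + D[i] v[i] + E[i] v[i+1]
        w = D * v
        w[1:] += E * v[:-1]
        w[:-1] += E * v[1:]
        return w

    x = np.asarray(x0, dtype=float)
    r = b - matvec(x)
    p = r
    rsold = np.dot(r, r)
    for _ in range(max_iter):
        Ap = matvec(p)
        alpha = rsold / np.dot(p, Ap)
        x = x + alpha * p
        r = r - alpha * Ap
        rsnew = np.dot(r, r)
        if np.sqrt(rsnew) < tol:
            break
        p = r + (rsnew / rsold) * p
        rsold = rsnew
    return x

# Strictly diagonally dominant with positive diagonal, hence positive definite.
n = 100
D = np.full(n, 4.0)
E = np.full(n - 1, -1.0)
b = np.ones(n)
x = solve_tridiagonal(D, E, b, np.zeros(n), tol=1e-10, max_iter=500)

# Dense reference matrix: np.diag(v, k) places v on the k-th diagonal.
A = np.diag(D) + np.diag(E, 1) + np.diag(E, -1)
print(np.allclose(A @ x, b))

# The original residual expression collapses to a single scalar.
bad = np.dot(D, x) + np.dot(E, x[1:]) + np.dot(E, x[:-1])
print(np.ndim(bad), (b - bad).shape)
```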

Copilot and Deep Neural Nets

While the examples above illustrate Copilot's proficiency with Python numerical libraries, we decided to push it a bit harder. In a recent post we looked at denoising autoencoders designed to remove noise from one-dimensional signals. After a non-trivial effort, we built an autoencoder based on 1-D convolutions that worked very well. When we asked Copilot to do the same by prompting it with a comment line, it paused and then produced the following.

```python
import torch
import torch.nn as nn

# Class of a one-dimensional convolutional denoising autoencoder
class ConvDenoisingAutoencoder(nn.Module):
    def __init__(self, input_dim, hidden_dim, output_dim):
        super(ConvDenoisingAutoencoder, self).__init__()
        self.encoder = nn.Sequential(
            nn.Conv1d(1, 16, 3, stride=3, padding=1),
            nn.ReLU(True),
            nn.MaxPool1d(2, stride=2),
            nn.Conv1d(16, 8, 3, stride=2, padding=1),
            nn.ReLU(True),
            nn.MaxPool1d(2, stride=1)
        )
        self.decoder = nn.Sequential(
            nn.ConvTranspose1d(8, 16, 3, stride=2),
            nn.ReLU(True),
            nn.ConvTranspose1d(16, 8, 5, stride=3, padding=1),
            nn.ReLU(True),
            nn.ConvTranspose1d(8, 1, 2, stride=2, padding=1),
            nn.ReLU(True)
        )

    def forward(self, x):
        x = self.encoder(x)
        x = self.decoder(x)
        return x
```

At first glance, this code is obviously flawed: the parameters input_dim, hidden_dim and output_dim are never used. However, the rest of the code is basically correct, which was surprising given that the __init__ signature was wrong. There is one real problem with this code: the output has the same length as the input only if the input has shape [*, 1, L] with L = 12m + 4 for some integer m ≥ 1 (for example L = 124 or L = 496); for any other length the output and input lengths differ and the autoencoder fails. This is not obvious, and it took some careful analysis of the code to realize it. We tested the class with some of the same data described in the previous post. While not as complete as the version we created, it was adequate. We suspect that the code is based on a pattern synthesized from many examples in GitHub, and that the input shape detail was explained in one of those. We have put a Jupyter notebook demonstrating the working code on GitHub.
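The length constraint can be checked without running the network by pushing a length L through each layer with the output-size formulas from the PyTorch documentation for Conv1d, MaxPool1d and ConvTranspose1d (assuming dilation 1 and no output_padding, as in Copilot's class). In the sketch below the helper names are ours and the (kernel, stride, padding) values are copied from the generated code. Working through the decoder gives an output length of 12m + 4, where m is the encoder's output length, so input and output agree exactly when L = 12m + 4 with m ≥ 1. That set contains every length of the form 124·4^k, but also lengths such as 136.

```python
def conv_out(L, k, s, p):
    """Output length of nn.Conv1d / nn.MaxPool1d with dilation 1."""
    return (L + 2 * p - k) // s + 1

def convT_out(L, k, s, p):
    """Output length of nn.ConvTranspose1d with dilation 1, output_padding 0."""
    return (L - 1) * s - 2 * p + k

def autoencoder_out_len(L):
    # Encoder, using (kernel, stride, padding) from ConvDenoisingAutoencoder.
    L = conv_out(L, 3, 3, 1)   # Conv1d(1, 16, 3, stride=3, padding=1)
    L = conv_out(L, 2, 2, 0)   # MaxPool1d(2, stride=2)
    L = conv_out(L, 3, 2, 1)   # Conv1d(16, 8, 3, stride=2, padding=1)
    L = conv_out(L, 2, 1, 0)   # MaxPool1d(2, stride=1)
    # Decoder.
    L = convT_out(L, 3, 2, 0)  # ConvTranspose1d(8, 16, 3, stride=2)
    L = convT_out(L, 5, 3, 1)  # ConvTranspose1d(16, 8, 5, stride=3, padding=1)
    L = convT_out(L, 2, 2, 1)  # ConvTranspose1d(8, 1, 2, stride=2, padding=1)
    return L

# Input lengths between 16 and 600 whose reconstruction has the same length.
matching = [L for L in range(16, 601) if autoencoder_out_len(L) == L]
print(matching[:5], autoencoder_out_len(128))
```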

Final Thoughts

Setting aside the legal and moral arguments, we ask two questions:

- Is Copilot a helpful programmer's assistant?

- How much should one trust Copilot suggestions?

To answer these, one must start by understanding that Copilot is not demonstrating human intelligence. Its responses are more closely related to synthetic art than to science. The genius of GPT-3 is its ability to take a prompt and weave an impressive, surreal story. But it is not art. Beautiful patterns in nature can often thrill and inspire us, but they are not human-created art. In the same way, GPT-3 takes natural language input and responds with text that reflects amazing combinations of human patterns of speech. Likewise, Copilot takes natural language input and maps it to software idioms and patterns that mimic the human creativity embedded in the GitHub “literature”.

Is Copilot a helpful programmer's assistant? Yes, especially when you can't remember the precise library function incantation needed to accomplish something simple. On the other hand, it can be a distraction when you know what you are doing and do not want a suggestion.

Copilot is always eager to “take the ball and run with it”. This can be fun to watch, but, as our examples illustrate, the results are best appreciated as synthetic art or poetry. The generated code can look very good and still contain subtle errors. Of course, this is a purely subjective evaluation. As previously mentioned, OpenAI has published a detailed study of the Codex model that was used to build Copilot, in which it reports a 28.8% success rate in generating correct code from docstrings. I am not sure I would trust a programmer with a 28.8% success rate with my big projects, but Copilot may be fine as an assistant for small library tasks. And this may be exactly what its creators intend.