In part 1 of this series, we looked at Microsoft Azure Batch and how it can be used to run MPI parallel programs. In this second part we describe Amazon Web Services Batch service and how to use it to schedule MPI jobs using the AWS parallelcluster command pcluster create. We conclude with a brief summary comparing AWS Batch to Azure Batch.

AWS Batch

Batch is designed to execute directed acyclic graphs (DAGs) of jobs where each job is a shell script, a Linux executable, or a Docker container image. The Batch service consist of 5 components.

- The Compute Environment which describes the compute resources that you want to make available to your executing jobs. The compute environment can be managed, which means that AWS scaled and configures instances for you, or it can be unmanaged where you control the resource allocation. There are two ways to provision resources. One is AWS Fargate (the AWS serverless infrastructure build for running containers) or on-demand EC2 or spot instances. You must also specify networking and subnets.

- The Batch schedular decides when and where to run your jobs using the available resources in your compute environment.

- Job queues are where you submit your jobs. The scheduler pulls jobs from the queues and schedules them to run on a compute environment. A queue is a named object that is associated with your compute environment. You can have multiple queues and there is a priority associated with each that indicates importance to the scheduler. Jobs in the higher priority queues get scheduled before lower priority queues.

- Job definitions are templates that define a class of job. When creating a job definition, you first specify if this is a Fargate job or ec2. You also specify how may retries in case the job fails and the container image that should be run for the job. ( You can also create a job definition for a multi-node parallel program, but in this case it must be ec2 based and it does not use a docker container. We discuss this in more detail below when we discuss MPI) Other details like memory requirements and virtual cpu count are specified in the job definition.

- Jobs are the specific instances that are submitted to the queues. To define a job, it must have a name, the name of the queue you want for it, the job definition template, the command string that the container needs to execute, and any dependencies. Job dependences, in the simplest form are just lists of the job IDs of the jobs in the workflow graph that must complete before this job is runnable.

To illustrate Batch we will run through a simple example consisting of three job. The first job does some trivial computation and then it writes a file to AWS S3. The other two jobs depend on the first job. When the first job is finished, the second and third are ready to run. Each of the subsequent jobs waits then wait for the file to appear in S3. When it is there, they read it, modify the content and write the result to a new file. In the end, there are now three files in S3.

The entire process of creating all of the Batch components and running the jobs can be accomplished by means of the AWS Boto3 python interface or it can be done with the Batch portal. We will take a mixed approach. We will use the portal to set up our compute environment and job queue and job definition template, but we will define the jobs and launch them with some python scripts. We begin with the computer environment. Go to the aws portal, look for the Batch service and go to that page. On the left are the component lists. Select “Compute environments”. Give it a name and make it managed.

Next we will provision it by selecting Fargate and setting the maximum vCPUs to 256.

Finally we need to setup the networking. This is tricky. Note that the portal has created you Batch compute environment in your current default region (as indicated in the upper right corner of the display). In my case it is “Oregon” which is US-west-2. So when you look at your default networking choices it will give you options that exist in your environment for that region as shown below. If none exist, you will need to create them. (A full tutorial on AWS VPC networking is beyond the scope of this tutorial.)

Next, we will create a queue. Select “Job queues” from the menu on the left and push the orange “Create” button. We give our new queue a name and a priority. We only have one queue so it will have Priority 1.

Next we need to bind the queue to our compute environment.

Once we have a job queue, compute environment now all we need from the portal is a job definition. We give it a name, say it is a Fargate job. Specify a retry number and a timeout number.

We next specify a container image to load. You can use Docker hub containers or AWS elastic container registry service images. In this case we use the latter. To create and save an image in the ECR, you only need to go to the ECR service, create a repository. In this case our container is called “dopi”. That step will give you the full name of the image. Save it. Next when build the docker image, you can tag it and push it as follows.

docker build -t=”dbgannon/dopi” .

docker tag dbgannon/dopi:latest 066301190734.dkr.ecr.us-west-2.amazonaws.com/dopi:latest

docker push 066301190734.dkr.ecr.us-west-2.amazonaws.com/dopi:latest

We can next provide a command line to give to the container, but we won’t do that here because we will provide that in the final job step.

There is one final important step. We set the number of vCPUs our task needs (1) and the memory (2GB) and the execution role and Fargate version. Our experience with Fargate shows version 1.3 is more reliable, but if you configure your network in exactly the right way, version 1.4 works as well.

Defining and Submitting Jobs

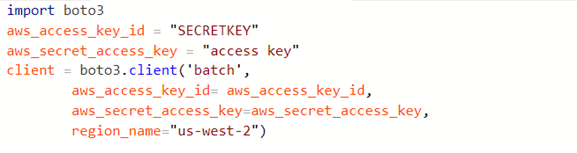

In our example the container runs a program that writes and reads files to AWS S3. To do that the container must have the authority to access S3. There are two ways to do this. One is to use the AWS IAM Service to create a role for s3 access that can be provided to the Elastic Container Service. The other, somewhat less secure, method is to simply pass our secret keys to the container which can use them for the duration of the Task. The first thing we need is a client object for Batch. The following code we can execute from our laptop. (note: if you have your keys stored in a .aws directory, then the key parameters are not needed.)

Defining and managing the jobs we submit to batch is much easier if we use the AWS Boto3 Python API. The function below wraps the submit job function from the API.

It takes your assigned jobname string, the name of the jobqueue, the JobDefinition name and two lists:

- The command string list to pass the container,

- The IDs of the jobs that this job depends upon completion.

It also has defaults for the memory size, the number of retries and duration in seconds.

In our example we have two containers, “dopi” and “dopisecond”. The first container invokes “dopi.py” with three arguments: the two AWS keys and a file name “FileXx.txt”. The second invokes “dopi2.py” with the same arguments plus the jobname string. The second waits for the first to terminate and then it reads the file and modifies it saves it under a new name. We invoke the first and two copies of the second as follows.

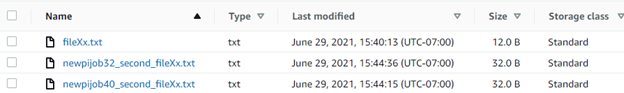

At termination we see the S3 bucket contains three files.

Running MPI jobs with the Batch Scheduler

Running MPI parallel job on AWS with the Batch scheduler is actually easier than running the Batch workflows. The following is based on the blog Running an MPI job with AWS ParallelCluster and awsbatch scheduler – AWS ParallelCluster (amazon.com). We use the Parallel cluster command pcluster to create a cluster and configure it. The cluster will consist of a head node and two worker nodes. We next log into the head node with ssh and run our MPI job in a manner that is familiar to anyone who has used mpirun on a supercomputer or cluster.

To run the pcluster command we need a configuration file that describes a cluster template and a virtual private cloud vpc network configuration. Because we are going to run a simple demo we will take very simple network and compute node configurations. The config file is shown below.

There are five parts to the configuration file, the region specification, a global segment that just points to the cluster spec. The cluster spec wants the name of an ssh keypair, the scheduler to use (in our case that is batch), an instance type and the base OS for the VM (we use alinux2 because it has all the mpi libraries we need), a pointer to the network details and the number of compute nodes (we used 2). The command to build a cluster with this configuration and named tutor2 is

pcluster create -c ./my_config_file.config -t awsbatch tutor2

This will take about 10 minutes to complete. You can track the progress by going to the ec2 portal. You should eventually see the master node running. You will notice that when this is complete that it has created a Batch jobqueue and an associated ec2 Batch compute environment and job definitions.

Then log into the head node with

pcluster ssh tutor2 -i C:/Users/your-home/.ssh/key-batch.pem

Once there you need to edit .bashrc to add an alias.

alias python=’/usr/bin/python3.7′

Then do

. ~/.bashrc

We now need to add two file to the head node. One is a shell script that can compile a MPI C program and then launch it with mpirun and the other is an MPI C program. These files are in the github archive for this chapter. You can send them to the head node with scp or simply load the files into a local editor on your machine and then paste them to the head node with

cat > submit_mpi.sh

cat >/shared/ mpi_hello_world.sh

Note that the submit script wants to find the C program in the /shared directory. The hello world program is identical to the one we used in the Azure batch MPI example. The only interesting part is where we pass a number from one node to the next and then do a reduce operation.

The submit_mpi.sh shell script sets up various alias and does other housekeeping tasks, however the main content items are the compile and execute steps.

To compile and run this we execute on the head node:

awsbsub -n 3 -cf submit_mpi.sh

The batch job ID is returned from the submit invocation. Looking at the ec2 console we see

The micro node is the head node. There was an m4 general worker which was created with the head but it is not needed any more so it has been terminated. Three additional c4.large nodes have been created to run the MPI computation.

The job id is of the form of a long string like c5e8f53f-618d-46ca-85a5-df3919c1c7ee.

You can check on its status from either the Batch console or from the head node with

awsbstat c5e8f53f-618d-46ca-85a5-df3919c1c7ee

To see the output do

awsbout c5e8f53f-618d-46ca-85a5-df3919c1c7ee#0

The result is shown below. You will notice that there are 6 processes running but we only have 3 nodes. That is because we specified 2 virtual cpus per node in our original configuration file.

Comparing Azure Batch to AWS Batch.

In our previous post we described Azure Batch and, in the paragraphs above, we illustrated AWS Batch. Both batch systems provide ways to automate workflows organized as DAGs and both provide support for parallel programming with MPI. To dig a bit deeper, we can compare them along various technical dimensions.

Compute cluster management.

Defining a cluster of VMs is very similar in both Azure Batch and AWS Batch. As we have shown in our examples both can be created and invoked from straight forward Python functions. One major difference is that AWS Batch supports not only standard VMs (EC2) but also their serverless container platform Fargate. In AW Batch you can have multiple Compute clusters and each compute cluster has its own Job queue. Job queues can have priority levels assigned and when tasks are created, they must be assigned to a job queue. Azure Batch does not have a concept of job queue.

In both cases, the scheduler will place all tasks that do not depend on another in the ready-to-run state.

Task creation

In Azure Batch tasks are encapsulated windows or linux scripts or program executables and collections of tasks are associated with objects called jobs. In Azure Batch binary executables are loaded into a Batch resource called Applications. Then when a task is deployed the executable is pulled into the VM before it is needed.

In AWS Batch, tasks are normally associated with Docker containers. This means that when a task is executed it must first pull the container from the hub (Docker hub or AWS container registry). The container is then passed a command line to execute. Because of the container pull, task execution may be very slow if the actual command is simple and fast. But, because it is a container, you can have a much more complex application stack than a simple program executable. As we demonstrated with the MPI version of AWS Batch it is also possible to have a simple command line, in that case it is mpirun.

Dependency management

In our demo of Azure Batch, we did not illustrate general dependency. That example only illustrated a parallel map operation. However, if we wanted to create a map-reduce graph that can be accomplished by creating a task that is dependent upon the completion of all of the tasks in the map phase. The various versions of dependencies handled are described here.

AWS Batch, as we demonstrated above, also has a simple mechanism to specify that a task is dependent upon the completion of others. AWS Batch also has the concept of Job Queues and the process of defining and submitting a job requires a Job Queue.

MPI parallel job execution

Both Azure Batch and AWS Batch can be used to run MPI parallel jobs. In the AWS case that we illustrated above we used pcluster which deploys all the AWS Batch objects (compute environment, job queue and job descriptions) automatically. The user then invokes the mpirun operation directly from the head node. This step is very natural for the veteran MPI programmer.

In the case of Azure batch the MPI case follows the same pattern of all Azure Batch scripts: first create the pool and wait for the VMS to come alive, then create the job, add the task (which is the mpirun task) and then wait until the job competes.

In terms of ease-of-use, one approach to MPI program execution is not easier then the other. Our demo involves only the most trivial MPI program and we did not experiment with more advanced networking options, or scale testing. While the demos were trivial, the capabilities demonstrated are not. Both platforms have been used by large customers in engineering, energy research, manufacturing and, perhaps most significantly, life sciences.

The code for this project is available at dbgannon/aws-batch (github.com)